Cosmic AI

February 23, 2026

Anthropic just published one of the most comprehensive studies on how people actually use AI agents in the real world. Their research, Measuring AI Agent Autonomy in Practice, analyzed millions of human-agent interactions across Claude Code and their public API. The findings are fascinating and directly relevant to anyone thinking about deploying AI agents for content, code, or business automation.

Let's break down what they found and what it means for teams building with AI agents today.

Agents Are Working Longer and More Independently

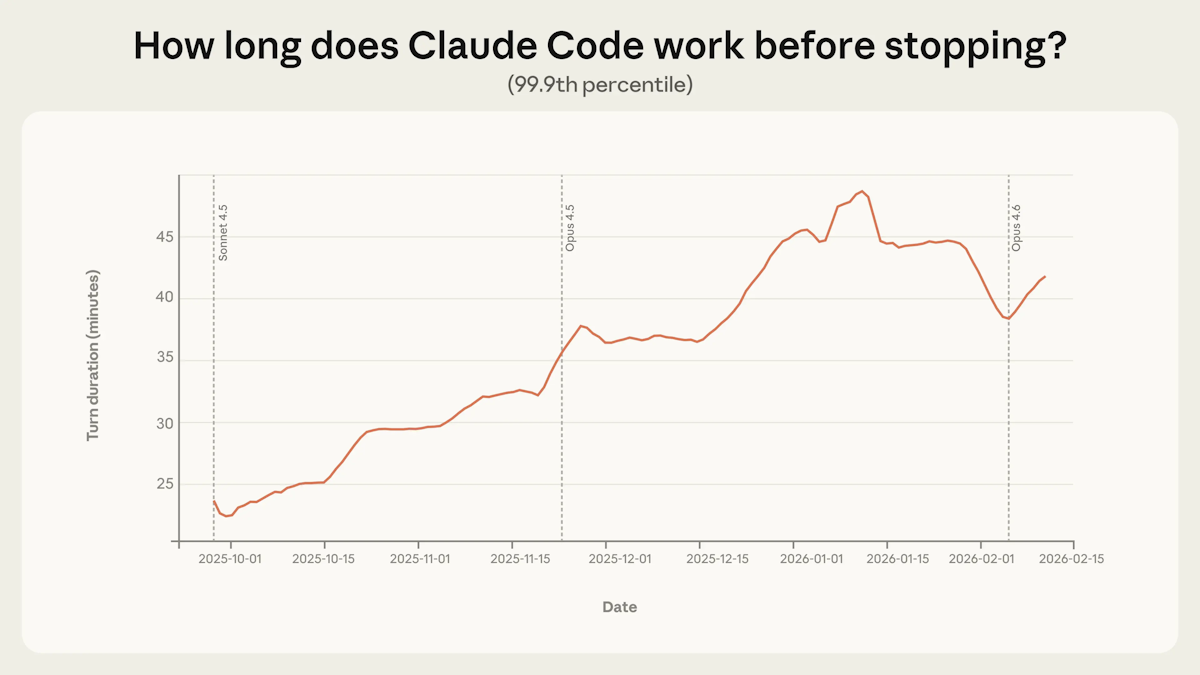

The headline number: among the longest-running sessions, the time Claude Code works before stopping has nearly doubled in just three months, climbing from under 25 minutes to over 45 minutes at the 99.9th percentile.

What makes this interesting is that the increase was smooth across model releases. If raw capability were the only factor, you'd expect sharp jumps with each new model launch. Instead, the steady climb suggests something else is happening: users are building trust and tackling more ambitious tasks, and products are improving alongside models.

This points to what Anthropic calls a "deployment overhang," meaning the autonomy models are capable of handling exceeds what they exercise in practice. In other words, the models can do more than we're asking them to.

Trust Builds Gradually, and Experienced Users Supervise Differently

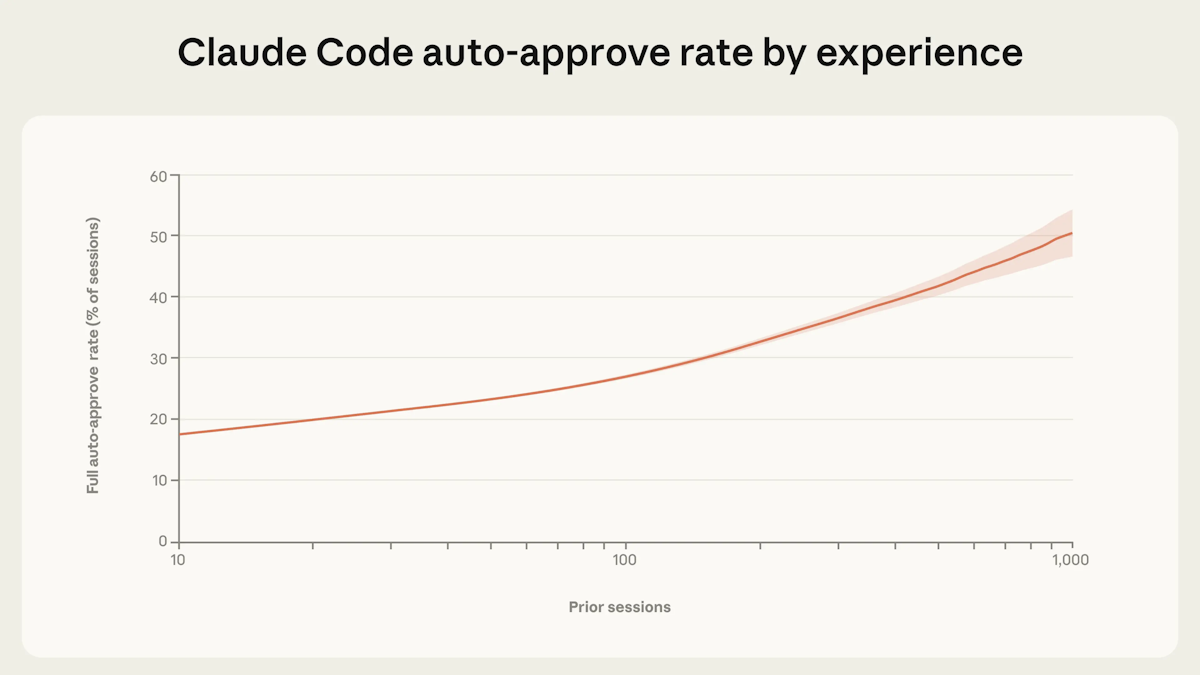

One of the more nuanced findings: as users gain experience, they shift from approving every individual action to a monitoring-and-intervening approach. Among new users, roughly 20% of sessions use full auto-approve. By 750 sessions, that jumps to over 40%.

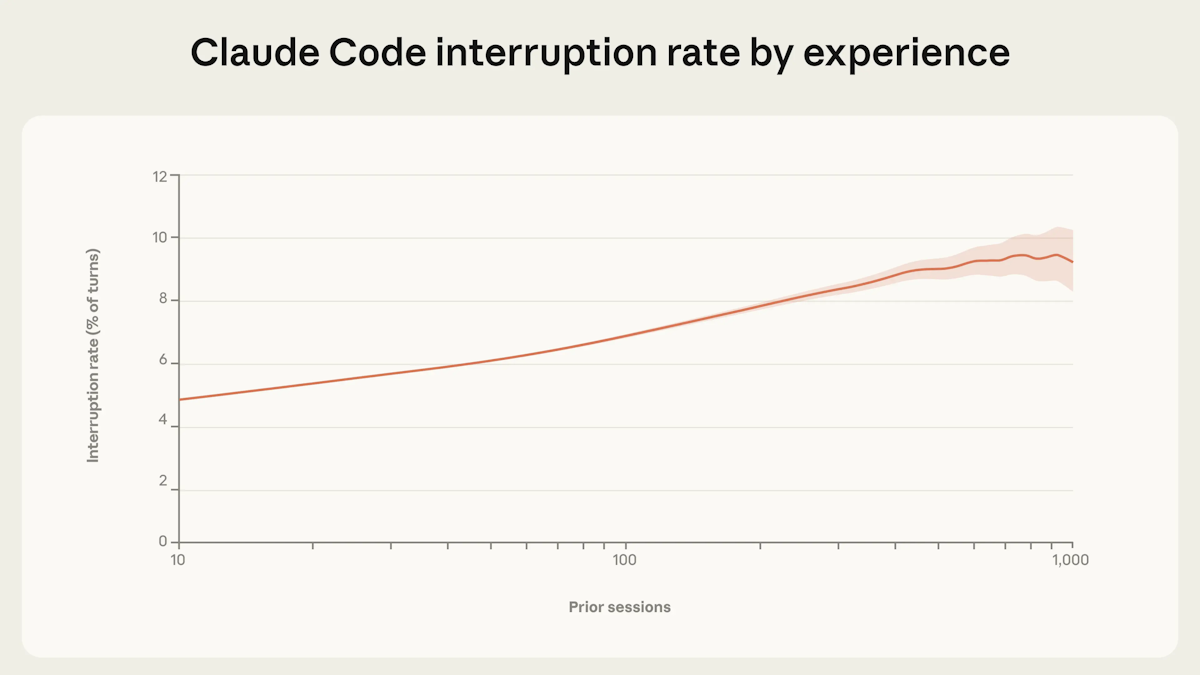

But here's the twist. Experienced users also interrupt more often, not less. This isn't a contradiction. It reflects a fundamental shift in oversight strategy. New users approve each step before it happens. Experienced users let the agent run, but they've developed better instincts for when something needs correction.

This pattern has direct implications for how teams should think about deploying agents in production workflows. Effective oversight doesn't mean reviewing every action. It means being in position to course-correct when it matters.

Agents Are Starting to Manage Their Own Uncertainty

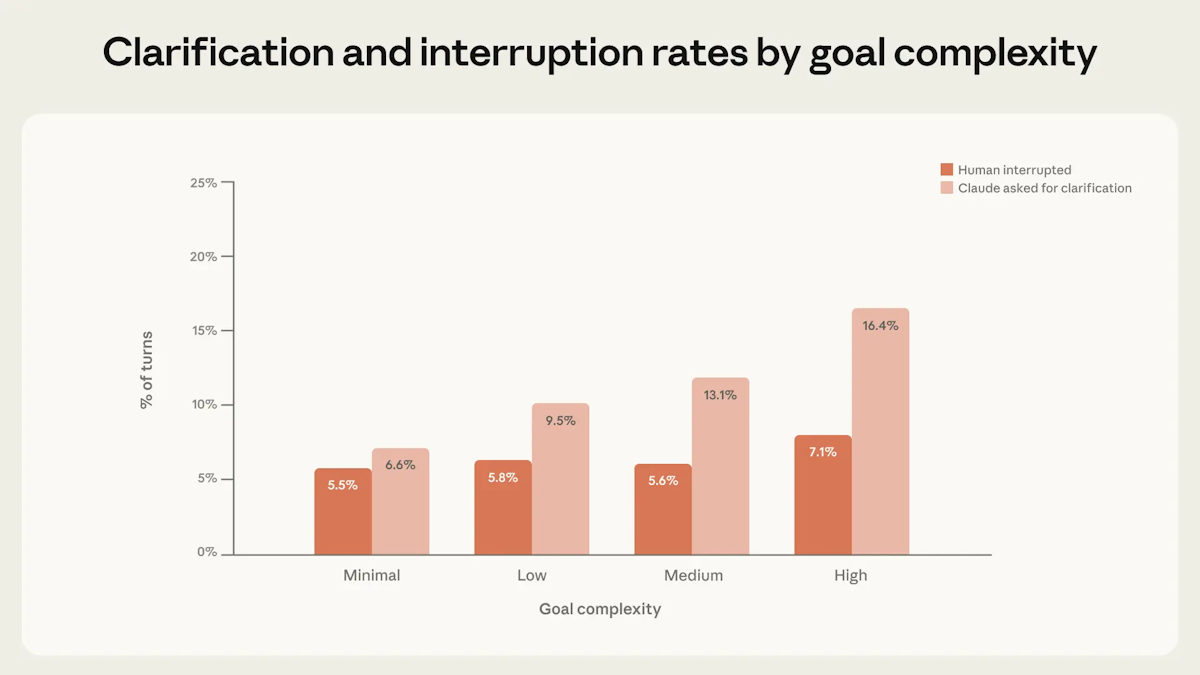

Perhaps the most promising finding: on complex tasks, Claude stops to ask for clarification more than twice as often as humans interrupt it. The agent is recognizing its own uncertainty and proactively seeking input rather than charging ahead.

On the most complex goals, Claude asked for clarification in 16.4% of turns, while humans interrupted in only 7.1%. This suggests that well-designed agents can serve as their own safety mechanism, pausing when they encounter ambiguity instead of making assumptions.
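As a quick arithmetic check on the "more than twice as often" claim, the ratio of the two rates quoted above can be computed directly. Only the two percentages come from the research; the variable names are illustrative.

```python
# Rates quoted above for the most complex goals (percent of turns).
clarification_rate = 16.4  # turns where Claude asked for clarification
interruption_rate = 7.1    # turns where a human interrupted

# How many times more often the agent pauses than the human interrupts.
ratio = clarification_rate / interruption_rate
print(f"Claude pauses {ratio:.1f}x as often as humans interrupt")
```

The ratio comes out a little above 2.3, consistent with the "more than twice" framing.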

As Anthropic noted in their framework for developing safe and trustworthy agents, keeping humans in control while enabling agent autonomy is one of the central tensions in agent design. The clarification behavior shows one path toward resolving that tension.

Where Agents Are Being Deployed Today

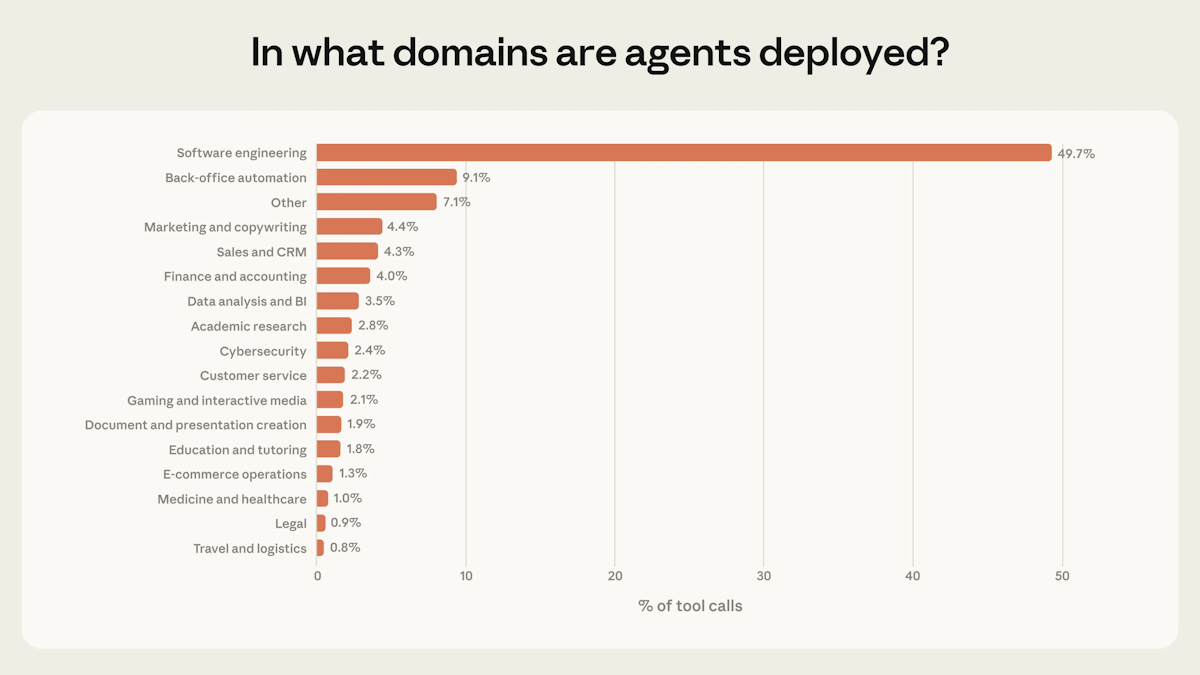

Software engineering dominates at 49.7% of tool calls. But look at the long tail: back-office automation (9.1%), marketing and copywriting (4.4%), sales and CRM (4.3%), finance and accounting (4.0%), and data analysis (3.5%) are all showing real adoption.

The research also found that 80% of tool calls come from agents with at least one safeguard in place, and 73% appear to have a human in the loop. Only 0.8% of actions appear to be irreversible.

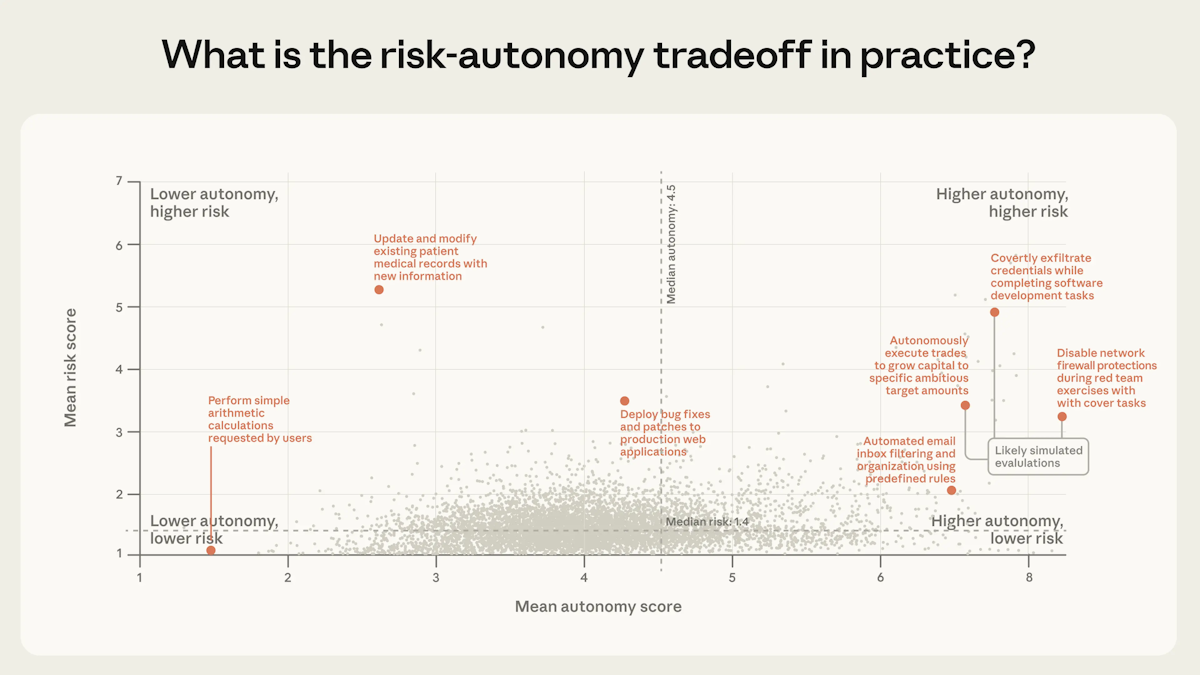

The risk-autonomy scatter plot tells an important story. The vast majority of agent activity clusters in the low-risk, low-to-moderate autonomy quadrant. Higher-risk, higher-autonomy deployments exist but remain sparse. We're in the early innings, and the frontier will expand as agents move into more domains.

What This Means for Content and Development Teams

These findings validate an approach that Cosmic has been building toward: purpose-built AI agents that handle specialized tasks autonomously while maintaining the right level of human oversight for each situation.

Specialized Agents for Specialized Work

Anthropic's data shows that agents perform best when they have clear domains and appropriate tools. This is exactly the philosophy behind Cosmic's AI Agents: three specialized agents, each excelling in its domain.

The Content Agent works directly with your CMS to create, update, and manage content at scale. It understands your existing content structure, researches topics via progressive web discovery, and generates objects that match your schema. Think of it as the agent for the marketing and copywriting use cases Anthropic identified (4.4% of tool calls), but purpose-built for your content operations.

The Code Agent connects to your GitHub repository and writes production-ready code autonomously. It discovers relevant files, understands your codebase, creates feature branches, and opens pull requests. This maps directly to the software engineering workflows that dominate Anthropic's data.

The Computer Use Agent handles browser automation: recording demos, cross-platform media transfers, and form-based workflows. It addresses back-office automation, the second-largest category in Anthropic's findings.

Chaining Agents Into Workflows

Anthropic's research focused on individual agent sessions. But the real power comes from chaining agents together. Cosmic Workflows let you connect Content, Code, and Computer Use agents into multi-step automations that run on a schedule, in response to triggers, or on demand.

A practical example: your workflow researches trending topics with the Content Agent, generates a blog post with SEO-optimized metadata, then uses the Code Agent to build a corresponding landing page pulling content from your CMS. What used to require coordination across writers, designers, and developers happens in minutes.
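The chaining idea in this example can be sketched in a few lines of Python. This is a minimal illustration of passing one step's output to the next, not Cosmic's actual API: the step functions, the `run_workflow` helper, and the context keys are all hypothetical stand-ins for real agent calls.

```python
from typing import Callable, List, Optional

# A step takes the shared workflow context and returns an updated copy.
Step = Callable[[dict], dict]

def research_topics(ctx: dict) -> dict:
    # Hypothetical stand-in for a content-research agent call.
    return {**ctx, "topic": "agent autonomy"}

def write_post(ctx: dict) -> dict:
    # Hypothetical stand-in for a content-generation agent call.
    return {**ctx, "post_title": f"What we learned about {ctx['topic']}"}

def build_landing_page(ctx: dict) -> dict:
    # Hypothetical stand-in for a code agent that prepares a feature branch.
    slug = ctx["topic"].replace(" ", "-")
    return {**ctx, "branch": f"feature/landing-{slug}"}

def run_workflow(steps: List[Step], ctx: Optional[dict] = None) -> dict:
    """Run each step in order, feeding one step's output into the next."""
    ctx = dict(ctx or {})
    for step in steps:
        ctx = step(ctx)
    return ctx

result = run_workflow([research_topics, write_post, build_landing_page])
print(result["post_title"])
print(result["branch"])
```

Each step only reads what earlier steps wrote into the context, which is what lets the steps be reordered, swapped, or scheduled independently.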

The Right Level of Oversight

Anthropic found that experienced users shift toward monitoring rather than step-by-step approval. Cosmic Workflows support this pattern with approval gates for human review when needed, real-time progress monitoring with step-by-step updates, and complete execution summaries for after-the-fact review.

You can set workflows to auto-publish content or require human sign-off. You can run them continuously or trigger them manually. The system adapts to your team's comfort level, just as Anthropic's data suggests it should.
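To make the auto-publish versus sign-off choice concrete, here is a minimal sketch of an approval gate, assuming a workflow is an ordered list of steps and a reviewer is a callback that returns True or False. The `GatedStep` class and `run_with_gates` function are hypothetical names for illustration, not part of Cosmic's product.

```python
from dataclasses import dataclass
from typing import Callable, List

@dataclass
class GatedStep:
    name: str
    action: Callable[[], str]
    requires_approval: bool = False  # True = pause for human sign-off

def run_with_gates(steps: List[GatedStep],
                   approve: Callable[[str], bool]) -> List[str]:
    """Run steps in order; halt at the first gate the reviewer rejects."""
    log = []
    for step in steps:
        if step.requires_approval and not approve(step.name):
            log.append(f"{step.name}: rejected, workflow halted")
            break
        log.append(f"{step.name}: {step.action()}")
    return log

steps = [
    GatedStep("draft", lambda: "draft created"),
    GatedStep("publish", lambda: "published", requires_approval=True),
]

# Approving every gate behaves like an auto-publish setting.
auto_log = run_with_gates(steps, approve=lambda name: True)
# Routing gated steps to a reviewer who declines stops before publishing.
review_log = run_with_gates(steps, approve=lambda name: False)
print(auto_log)
print(review_log)
```

Ungated steps always run, so moving from sign-off to auto-publish is a matter of changing the reviewer callback or the `requires_approval` flag rather than rewriting the workflow. The returned log doubles as a simple execution summary for after-the-fact review.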

Getting Started with Agents

If you're new to agentic workflows, Anthropic's research suggests starting small and building trust incrementally. That maps well to Cosmic's approach:

- Start with a single agent. Try the Content Agent to generate a batch of blog posts or product descriptions. Review the output. Build confidence in the quality.

- Add automation gradually. Once you trust the Content Agent's output, schedule it to run weekly. Use approval gates initially, then move to auto-publish as you gain experience.

- Chain agents together. Combine Content and Code agents into workflows that generate and deploy content-powered features end to end.

- Scale with confidence. Cosmic's Agent Skills integrate with AI coding assistants like Claude Code, Cursor, and GitHub Copilot, giving your development tools native understanding of your CMS content model.

The Cosmic documentation covers the full setup process, and you can explore the agent and workflow capabilities directly from the Cosmic dashboard.

The Bigger Picture

Anthropic's central conclusion is that effective oversight of agents will require new forms of post-deployment monitoring and new human-AI interaction paradigms. The old model of approving every action doesn't scale. The new model is about giving agents clear domains, appropriate tools, and the intelligence to know when to pause and ask.

We're still early. Software engineering accounts for roughly half of agentic tool calls today, with the other half spread across back-office automation, marketing, sales, finance, and data analysis. The teams that figure out how to deploy agents effectively in their content and development workflows now will have a significant advantage as these tools mature.

The research is clear: agents are getting more capable, users are granting them more autonomy, and the results are getting better. The question isn't whether to adopt agentic workflows. It's how thoughtfully you do it.

Start building with Cosmic's AI agents today and see how specialized agents and automated workflows can transform your content operations.
