Cosmic

March 16, 2026

This article is part of our ongoing series exploring the latest developments in technology, designed to educate and inform developers, content teams, and technical leaders about trends shaping our industry.

Local voice assistants are getting reliable. Maps APIs are being rebuilt for AI agents. MCP servers are eating context windows. And Polymarket bettors are threatening journalists over missile stories. Here is what matters today.

Building a Local Voice Assistant That Actually Works

A Home Assistant community member documented their journey to a reliable locally hosted voice assistant. The post walks through the hardware choices, speech recognition models, and integration patterns that make local voice control practical.

The appeal is clear. No cloud dependencies. No subscription fees. No audio leaving your network. The tradeoff has always been reliability. Cloud assistants benefit from massive training datasets and continuous improvement. Local alternatives have historically felt like science projects.

That gap is closing. Open-source speech models like Whisper have reached production quality. Local LLMs handle intent parsing. The missing piece was integration: getting speech recognition, intent parsing, and device control to work together smoothly. This guide fills that gap for Home Assistant users.
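The three stages named above (speech recognition, intent parsing, device control) can be sketched as a small pipeline. This is a minimal illustration, not the community member's setup: `run_voice_command`, `Intent`, and the `fake_*` stand-ins are hypothetical names, and in a real deployment the stand-ins would be replaced by a local speech model, a local LLM, and a Home Assistant service call.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Intent:
    action: str  # e.g. "turn_on"
    target: str  # e.g. "kitchen light"


def run_voice_command(
    audio: bytes,
    transcribe: Callable[[bytes], str],
    parse_intent: Callable[[str], Optional[Intent]],
    execute: Callable[[Intent], str],
) -> str:
    """Run one utterance through the three local stages."""
    text = transcribe(audio)
    if not text.strip():
        return "no speech detected"
    intent = parse_intent(text)
    if intent is None:
        return f"could not understand: {text!r}"
    return execute(intent)


# Hypothetical stand-ins so the sketch runs without any models installed.
def fake_transcribe(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_parse(text: str) -> Optional[Intent]:
    words = text.lower().split()
    if len(words) >= 3 and words[0] == "turn" and words[1] in ("on", "off"):
        return Intent(action=f"turn_{words[1]}", target=" ".join(words[2:]))
    return None


def fake_execute(intent: Intent) -> str:
    return f"{intent.action} -> {intent.target}"
```

Keeping each stage behind a plain callable means any one of them can be swapped or tested on its own, which is where most of the integration effort tends to go.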

For teams building voice-enabled applications, the architecture patterns here translate beyond home automation. Local inference is becoming viable for privacy-sensitive use cases.

Maps APIs Rebuilt for AI Agents

Voygr, a YC W26 company, launched a maps API designed specifically for AI agents. Traditional mapping APIs return data formatted for human interfaces. Voygr returns structured data optimized for LLM consumption.

The difference matters when agents need to reason about locations. A standard directions API returns turn-by-turn instructions meant for display. An agent needs to understand relationships between places, travel time tradeoffs, and contextual relevance to a task.
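To make the contrast concrete, here is a rough sketch of the kind of structured record an agent could reason over. It is not Voygr's response format; `PlaceOption`, its fields, and `rank_for_task` are hypothetical and only illustrate travel-time tradeoffs and task relevance expressed as data instead of display text.

```python
from dataclasses import dataclass


@dataclass
class PlaceOption:
    name: str
    travel_minutes: int  # time to reach it from the current location
    open_now: bool
    matches_task: bool   # e.g. the place sells what the task needs


def rank_for_task(options: list[PlaceOption]) -> list[PlaceOption]:
    """Keep places that are open and relevant, nearest first."""
    usable = [p for p in options if p.open_now and p.matches_task]
    return sorted(usable, key=lambda p: p.travel_minutes)
```

With fields like these, an agent can filter and compare candidates directly instead of parsing turn-by-turn text written for a screen.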

This reflects a broader shift. APIs built for human-facing applications need rethinking for agent workflows. The same content structured differently enables different capabilities. For content systems, the parallel is direct: structured content that works for websites may need different formatting for AI consumption.

MCP Servers Are Eating Context Windows

A post on Apideck argues that MCP servers are consuming too much context. The Model Context Protocol, designed to give AI assistants access to external tools, loads capability descriptions that quickly fill up available tokens.

The problem is architectural. MCP servers expose their full capability set upfront. An agent connecting to multiple servers inherits all their tool definitions, whether relevant to the current task or not. Context windows of 128K to 1M tokens make that overhead look negligible. In practice, it adds up fast.
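A back-of-the-envelope estimate shows how the overhead accumulates. The sketch below is an assumption-laden approximation, not a measurement from the Apideck post: it uses a rough four-characters-per-token heuristic instead of a real tokenizer, and `estimate_tokens` and `context_share` are hypothetical helpers.

```python
import json

CHARS_PER_TOKEN = 4  # rough heuristic (assumption); real tokenizers vary


def estimate_tokens(tool_definition: dict) -> int:
    """Approximate the tokens one serialized tool definition occupies."""
    return len(json.dumps(tool_definition)) // CHARS_PER_TOKEN


def context_share(servers: dict[str, list[dict]], context_window: int) -> float:
    """Fraction of the context window taken by all tool definitions."""
    total = sum(
        estimate_tokens(tool)
        for tools in servers.values()
        for tool in tools
    )
    return total / context_window
```

As an illustrative calculation with made-up numbers: five servers with 20 tools each, at roughly 500 tokens per definition, come to 50,000 tokens, or about 39% of a 128K window before the conversation starts.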

The proposed alternative uses CLI interfaces with dynamic capability loading. Instead of declaring everything upfront, tools are discovered and loaded on demand. This trades some latency for context efficiency.
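The load-on-demand idea can be sketched independently of any particular CLI. This is not the implementation from the post: `LazyToolRegistry` and its methods are hypothetical, and each loader is just a callable that returns a full tool definition when asked.

```python
from typing import Callable


class LazyToolRegistry:
    """Expose one-line summaries upfront; load full definitions on demand."""

    def __init__(self) -> None:
        self._summaries: dict[str, str] = {}
        self._loaders: dict[str, Callable[[], dict]] = {}
        self._loaded: dict[str, dict] = {}

    def register(self, name: str, summary: str, loader: Callable[[], dict]) -> None:
        self._summaries[name] = summary
        self._loaders[name] = loader

    def index(self) -> str:
        """The only text placed in the prompt by default."""
        return "\n".join(f"{n}: {s}" for n, s in sorted(self._summaries.items()))

    def load(self, name: str) -> dict:
        """Fetch the full definition the first time a tool is needed."""
        if name not in self._loaded:
            self._loaded[name] = self._loaders[name]()
        return self._loaded[name]
```

The extra call to `load` is the latency cost mentioned above; the saving is that only the short index sits in context until a tool is actually used.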

For teams building AI workflows, context management is a real constraint. Understanding where tokens go helps optimize agent architectures.

LLM-Assisted Development in Practice

A detailed post on how one developer writes software with LLMs generated significant discussion. Rather than debating whether AI helps, it documents specific workflows and where they break down.

The key insight: LLMs excel at boilerplate and pattern matching but struggle with novel architecture decisions. Using them effectively means decomposing problems into pieces where pattern matching helps. The author describes prompting strategies, context management, and knowing when to step back and write code directly.

This matches what teams are finding with AI-assisted content creation. The tools accelerate certain tasks dramatically while being unhelpful for others. Knowing the boundary matters more than the tools themselves.

Polymarket Bettors Threaten Journalist

In a disturbing development, Polymarket gamblers threatened a Times of Israel journalist over coverage of an Iran missile story. Bettors with money riding on prediction market outcomes demanded the reporter change their story to match their bets.

Prediction markets were supposed to aggregate information into accurate forecasts. When market participants have incentives to manipulate the underlying information, the model breaks. This is the financial version of Goodhart's Law: when a measure becomes a target, it ceases to be a good measure.

The incident raises questions about prediction markets' role in news. If reporters face harassment based on how their coverage affects bets, editorial independence is compromised. The incentive structure needs rethinking.

The 49MB Web Page

An audit found a news website serving 49MB pages. The culprits: unoptimized images, excessive JavaScript, third-party tracking scripts, and aggressive advertising frameworks.

The number is absurd but not unusual. Modern web development makes it easy to accumulate dependencies. Each analytics tool, ad network, and feature widget adds payload. Without active monitoring, pages bloat.
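Active monitoring can be as simple as checking page weight against a budget. The sketch below assumes per-category byte counts are already collected (for example from a browser's network panel); `weight_report`, the category names, and the budget value are hypothetical, and the sample figures are illustrative, not taken from the audit.

```python
def weight_report(assets: dict[str, int], budget_bytes: int) -> dict:
    """Summarize total page weight and flag a budget overrun."""
    total = sum(assets.values())
    largest = max(assets, key=assets.get) if assets else None
    return {
        "total_bytes": total,
        "over_budget": total > budget_bytes,
        "largest_category": largest,
    }


# Illustrative numbers only: a 49MB page checked against a 2MB budget.
sample = {
    "images": 30_000_000,
    "javascript": 12_000_000,
    "ads_and_tracking": 7_000_000,
}
report = weight_report(sample, budget_bytes=2_000_000)
```

Running a check like this in a build or deploy step turns page weight into something that fails loudly instead of drifting upward unnoticed.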

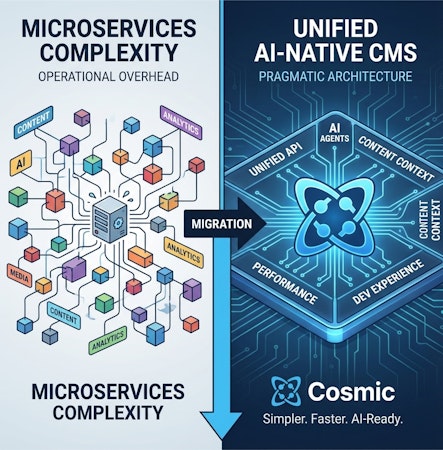

For content teams, performance directly affects user experience and SEO. Headless architectures provide more control over what gets served. The content stays clean; the presentation layer determines the final weight.

Quick Hits

GPU infrastructure: Chamber, a YC W26 startup, launched an AI teammate for GPU infrastructure management. The tool handles provisioning, monitoring, and optimization tasks that typically require dedicated DevOps attention.

FreeBSD appreciation: A post explaining why one developer loves FreeBSD highlights the operating system's consistency, documentation quality, and ZFS integration.

DNSSEC enforcement: Certificate authorities now check for DNSSEC when issuing certificates. Domains with DNSSEC configured get additional validation; misconfigured DNSSEC can block certificate issuance.

Job market visualization: Andrej Karpathy released a US job market visualizer showing hiring trends across industries and geographies.

Plant watering automation: A developer documented automating plant watering with Home Assistant, including moisture sensors, scheduling logic, and failure handling.

Building content infrastructure that adapts to how AI agents consume information? Start with Cosmic and structure your content for the agentic future.
